Introduction: The Question That Started It All

In recent years, we have come across artificial intelligence everywhere, from chatbots to search engines and everyday tools. It may feel like a recent development, but the history of artificial intelligence goes much further back and began with a simple question: can a machine think?

To build a strong foundation in AI, explore our complete guide: AI Fundamentals: A Beginner’s Guide to Artificial Intelligence

In the early days, intelligence was understood as the ability to solve problems using logic and predefined rules. Computers followed instructions and performed calculations, but they could not learn or adapt. Over time, this view began to shift as researchers developed systems that could recognize patterns, learn from data, and improve their performance.

This journey spans more than 80 years, from the early experiments of the 1940s to the powerful AI systems we see today. In this article, we explore how artificial intelligence evolved through different stages and became part of everyday life.

The Mechanical Awakening (1940–1956)

Early Computers and Logical Machines

In the 1940s, computers were very different from what we use today. Machines like Colossus and ENIAC were large, expensive, and filled entire rooms. They were built to perform calculations and follow instructions. While they could not think, they demonstrated that machines could process logic and solve problems far faster than any human.

The First Artificial Neuron

In 1943, neuroscientist Warren McCulloch and mathematician Walter Pitts introduced one of the earliest ideas behind artificial intelligence in their paper “A Logical Calculus of the Ideas Immanent in Nervous Activity.” They proposed a simple mathematical model of a neuron that takes binary inputs and fires only when their combined signal reaches a threshold. Networks of these units could compute logical functions, which suggested that aspects of thinking could be expressed as computation and laid the groundwork for neural networks.
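
To make the threshold idea concrete, here is a minimal sketch of such a unit in Python. It is a simplification rather than the paper's exact formalism: the function names are our own, and inhibition is modelled with a negative weight, which is an assumption made for brevity.

```python
def mcp_neuron(inputs, weights, threshold):
    """Return 1 (fire) when the weighted sum of binary inputs reaches the threshold."""
    total = sum(x * w for x, w in zip(inputs, weights))
    return 1 if total >= threshold else 0


def logical_and(a, b):
    # Both inputs must be active to reach a threshold of 2.
    return mcp_neuron([a, b], [1, 1], threshold=2)


def logical_or(a, b):
    # A single active input is enough to reach a threshold of 1.
    return mcp_neuron([a, b], [1, 1], threshold=1)


def logical_not(a):
    # Assumption: inhibition is represented by a weight of -1.
    return mcp_neuron([a], [-1], threshold=0)


if __name__ == "__main__":
    for a in (0, 1):
        for b in (0, 1):
            print(a, b, "AND:", logical_and(a, b), "OR:", logical_or(a, b))
```

Nothing here is learned. The weights and thresholds are chosen by hand, which is exactly the limitation later models set out to remove.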

The Turing Test

In 1950, British mathematician and computer scientist Alan Turing published his paper “Computing Machinery and Intelligence.” Instead of defining intelligence directly, he focused on how it could be recognized. He proposed what is now known as the Turing Test, where a machine is considered intelligent if it can behave like a human in conversation.

At its core, the Turing Test asks a simple question: if a human cannot reliably tell whether they are interacting with a machine or another person, can the machine be considered intelligent?

This idea shifted AI research toward observable behavior rather than abstract definitions of intelligence.

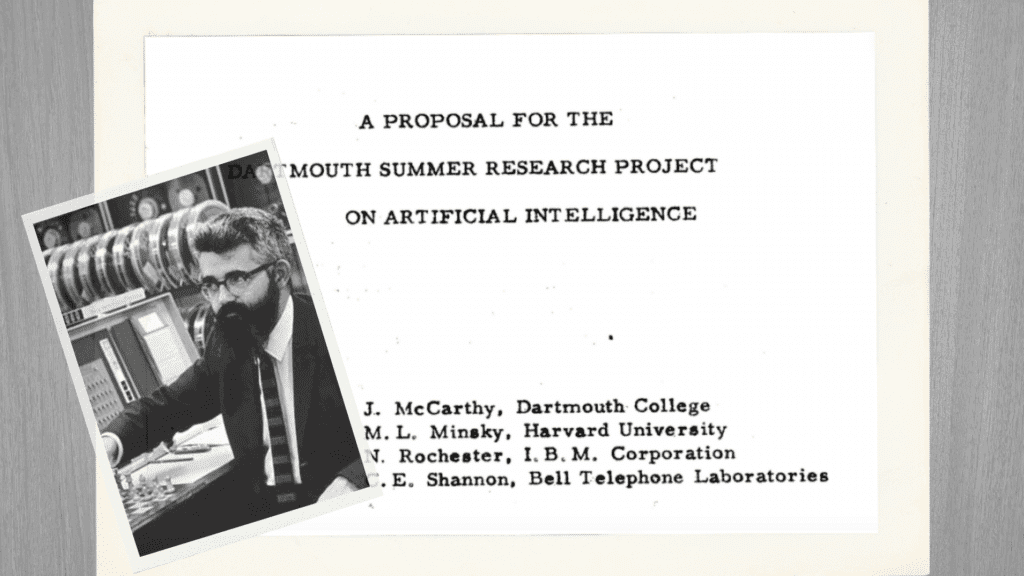

Birth of Artificial Intelligence

In 1956, a group of researchers, including computer scientist John McCarthy, gathered at Dartmouth College for a summer workshop. The proposal for the event, written the year before and titled “A Proposal for the Dartmouth Summer Research Project on Artificial Intelligence,” introduced the term artificial intelligence. The workshop established AI as a scientific field and set the direction for future research.

The Rule-Based Intelligence Era (1957–1973)

The Perceptron and Early Learning

In 1957, psychologist Frank Rosenblatt introduced the Perceptron, one of the first models capable of learning from data. Unlike earlier machines that followed fixed instructions, it adjusted its internal weights whenever it classified an example incorrectly. It could recognize simple patterns like shapes or signals, showing that machines could begin to learn rather than just execute commands.
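
The learning idea can be sketched in a few lines of Python. This is an illustration of the classic perceptron update rule, not a reconstruction of Rosenblatt's original machine. The function names, the learning rate, and the tiny OR dataset are assumptions chosen for the example.

```python
def predict(weights, bias, x):
    """Output 1 when the weighted sum plus bias is positive, otherwise 0."""
    activation = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if activation > 0 else 0


def train_perceptron(samples, epochs=20, lr=1.0):
    """Nudge weights toward the target each time a sample is misclassified."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for x, target in samples:
            error = target - predict(weights, bias, x)  # -1, 0, or +1
            weights = [w + lr * error * xi for w, xi in zip(weights, x)]
            bias += lr * error
    return weights, bias


# Hypothetical training data: the logical OR function.
OR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]

if __name__ == "__main__":
    weights, bias = train_perceptron(OR_DATA)
    for x, target in OR_DATA:
        print(x, "->", predict(weights, bias, x), "(expected", target, ")")
```

The weights start at zero and are shaped only by the examples. The rule is guaranteed to settle only when the classes can be separated by a straight line, as they can for OR.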

LISP and Symbolic AI

In 1958, computer scientist John McCarthy developed LISP, a programming language designed specifically for artificial intelligence. It allowed researchers to build systems using symbols and logical rules instead of just numbers.

This approach, known as symbolic AI, treated intelligence as structured knowledge that could be written and manipulated. For years, it became the dominant direction in AI research.

ELIZA and Early Chatbots

In 1966, computer scientist Joseph Weizenbaum created ELIZA, one of the earliest chatbot programs. It worked by matching patterns in user input and generating pre-written responses.

There was no real understanding behind it. Yet, for many people, it felt like a real conversation.
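
A rough sketch of this pattern-and-template approach is shown below. The rules are invented for illustration and are not taken from Weizenbaum's original script. The names `RULES`, `FALLBACK`, and `respond` are our own.

```python
import re

# Hypothetical rules: each pairs a pattern with a response template.
RULES = [
    (re.compile(r"\bi need (.+)", re.IGNORECASE), "Why do you need {0}?"),
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (mother|father)\b", re.IGNORECASE), "Tell me more about your {0}."),
]
FALLBACK = "Please go on."


def respond(message):
    """Return the template of the first matching rule, filled with the captured text."""
    text = message.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            return template.format(*match.groups())
    return FALLBACK


if __name__ == "__main__":
    print(respond("I need a holiday."))
    print(respond("Hello there"))
```

The program only echoes fragments of the user's own words back inside a fixed template, which is why the conversation can feel responsive without any understanding behind it.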

Limits of Rule-Based Systems

Despite early progress, rule-based systems struggled outside controlled environments. Every possible situation had to be defined in advance, making them difficult to scale. As problems became more complex, this approach began to break down, showing that intelligence could not rely on rules alone.

The AI Winters (1974–1993)

The First AI Winter

By the early 1970s, progress in artificial intelligence had slowed. Many early promises were not fulfilled, and systems struggled outside controlled environments. As a result, funding from governments and institutions began to decline.

This period became known as the first AI winter, a time when interest and investment in AI dropped significantly.

Rise of Expert Systems

In the late 1970s and early 1980s, AI saw a temporary revival with the rise of expert systems. These programs were designed to capture human knowledge using rules and apply it to specific domains like medicine and business.

For a time, this approach showed real-world success. Companies began adopting these systems, and it seemed like AI had finally found a practical path forward.

Backpropagation Breakthrough

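In 1986, researchers David Rumelhart, Geoffrey Hinton, and Ronald Williams popularized backpropagation in their paper “Learning Representations by Back-Propagating Errors.” Versions of the technique had been described earlier, but this work showed that it gave neural networks a practical way to learn: the error at the output is passed backward through the network, and each internal parameter is adjusted in proportion to its share of that error, rather than relying only on predefined rules.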

At the time, its impact was not immediate. But this idea later became a key foundation in the history of artificial intelligence, especially in the rise of modern deep learning.
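
The mechanics can be sketched with a deliberately tiny network. The example below is an assumption-laden illustration, not the paper's formulation: it uses one input, one hidden tanh unit, one linear output, and a squared-error loss. All names and starting values are hypothetical.

```python
import math


def forward(params, x):
    """Run the toy network and return the hidden activation and the output."""
    w1, b1, w2, b2 = params
    h = math.tanh(w1 * x + b1)
    y = w2 * h + b2
    return h, y


def loss(params, x, target):
    _, y = forward(params, x)
    return 0.5 * (y - target) ** 2


def backprop(params, x, target):
    """Apply the chain rule from the output back to each parameter."""
    w1, b1, w2, b2 = params
    h, y = forward(params, x)
    d_y = y - target            # dLoss/dy
    d_w2 = d_y * h              # output weight
    d_b2 = d_y                  # output bias
    d_h = d_y * w2              # error passed back to the hidden unit
    d_z = d_h * (1.0 - h * h)   # through tanh, whose derivative is 1 - tanh^2
    d_w1 = d_z * x              # hidden weight
    d_b1 = d_z                  # hidden bias
    return [d_w1, d_b1, d_w2, d_b2]


def train(params, x, target, lr=0.1, steps=200):
    """Plain gradient descent using the gradients from backprop."""
    for _ in range(steps):
        grads = backprop(params, x, target)
        params = [p - lr * g for p, g in zip(params, grads)]
    return params


if __name__ == "__main__":
    start = [0.5, -0.3, 0.8, 0.1]
    print("loss before:", loss(start, 1.0, 0.5))
    print("loss after: ", loss(train(start, 1.0, 0.5), 1.0, 0.5))
```

Real networks repeat the same backward pass across many units and layers, which is what makes the method scale to deep models.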

The Second AI Winter

Despite the progress, expert systems proved difficult to maintain and expensive to scale. They worked well in limited cases but often failed in more complex situations.

By the late 1980s, investment declined again, leading to the second AI winter. Research slowed, but important ideas continued to develop quietly in the background, preparing the way for the next phase of AI.

The Rise of Machine Learning (1994–2011)

Learning from Data

After years of limited progress, a new direction began to shape the field. Instead of writing rules for every situation, researchers started building systems that could learn from data. This shift toward data-driven models became the foundation of machine learning and marked an important phase in the history of artificial intelligence.

To explore machine learning concepts further, check out our detailed guide: Machine Learning for Beginners: A Simple Guide

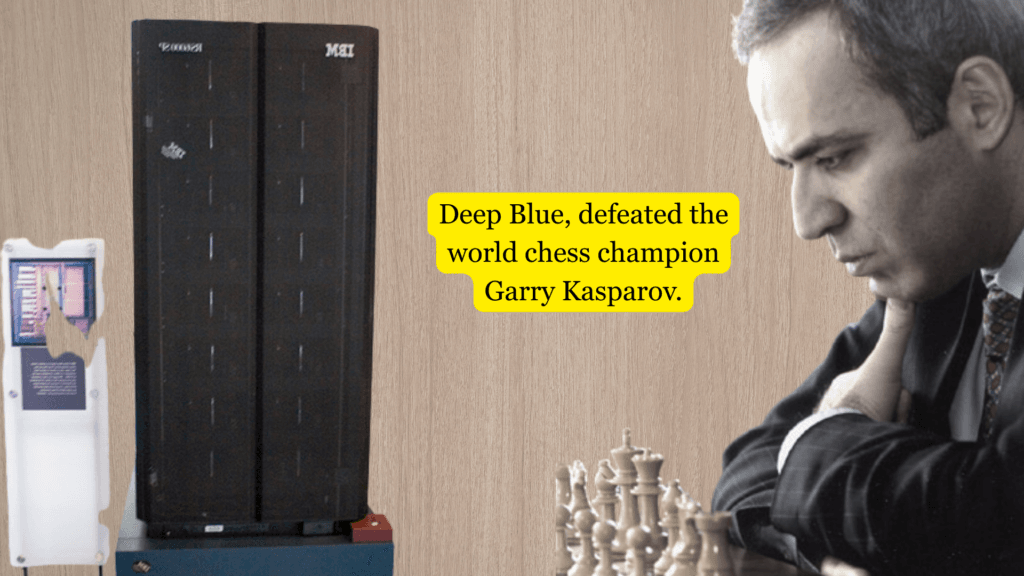

Deep Blue vs Garry Kasparov

In 1997, the world witnessed a historic moment. IBM’s Deep Blue defeated Garry Kasparov, the reigning world chess champion.

For the first time, a computer had beaten a reigning world chess champion in a full match. It showed that AI could achieve exceptional performance in specific tasks.

Statistical Machine Learning

In the 2000s, statistical machine learning became widely used. Instead of relying on fixed rules, algorithms learned patterns, probabilities, and relationships from large datasets. This made AI more practical and scalable.

Applications began to appear in search engines, recommendation systems, and predictive models, bringing AI closer to everyday use.

Deep Learning Revival

In 2006, computer scientist Geoffrey Hinton and his collaborators introduced a new approach in their paper “A Fast Learning Algorithm for Deep Belief Nets.” This work revived interest in neural networks by showing how deeper models could be trained more effectively.

Although progress was gradual at first, it marked the beginning of modern deep learning, which would soon transform the field.

ImageNet and Data Revolution

In 2009, computer scientist Fei-Fei Li and her collaborators introduced ImageNet, a large-scale dataset of labeled images, in the paper “ImageNet: A Large-Scale Hierarchical Image Database.” For the first time, researchers had access to enough labeled data to train more powerful image models effectively.

This combination of large datasets and improved algorithms pushed AI forward, setting the stage for major breakthroughs in the years that followed.

The Deep Learning Breakthrough (2012–2016)

AlexNet and Image Recognition

In 2012, a major breakthrough changed the direction of artificial intelligence. In their paper “ImageNet Classification with Deep Convolutional Neural Networks,” researchers Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton introduced a powerful deep neural network that later became known as AlexNet.

It achieved a dramatic improvement in image recognition accuracy, outperforming traditional methods by a large margin. For the first time, deep learning proved it could solve complex real-world problems at scale.

Generative Adversarial Networks

In 2014, researcher Ian Goodfellow and his colleagues introduced Generative Adversarial Networks (GANs) in the paper “Generative Adversarial Nets.” Unlike earlier systems that focused on analyzing data, GANs could generate new data.

Two networks, a generator and a discriminator, were trained together in a competitive process: the generator tried to produce convincing fakes, while the discriminator tried to tell them apart from real data. The result was the ability to create realistic images, patterns, and later even videos. This opened a new direction where AI was not just analyzing the world, but also generating new content.

AlphaGo Defeats Lee Sedol

In 2016, a historic moment captured global attention. AlphaGo, developed by DeepMind, defeated Lee Sedol, one of the world’s best Go players.

DeepMind was founded by Demis Hassabis and his colleagues, and was acquired by Google in 2014. The team focused on combining deep learning with reinforcement learning to solve complex problems. AlphaGo first learned from records of human expert games, then improved its strategy by playing a vast number of games against itself.

It did not just copy human moves. It discovered new ones. This event showed that AI could handle problems once considered too complex for machines, marking a turning point in the history of artificial intelligence.

The New Foundation of AI (2017–2021)

Transformers and Attention

In 2017, researchers at Google introduced a new model architecture in their paper “Attention Is All You Need.” This work presented the Transformer, a model that changed how machines process language.

Instead of reading text one word at a time, the Transformer uses a mechanism called attention to weigh the relationships between all the words in a sequence at once. This led to significant improvements in language understanding and became the foundation of most modern language models.
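
The core calculation, scaled dot-product attention, can be sketched in plain Python. This is a simplified illustration under stated assumptions: it leaves out the learned projections, multiple heads, and positional information that a real Transformer uses, and the toy vectors are made up for the example.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """For each query, mix the values according to how well the query matches each key."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys
        ]
        weights = softmax(scores)
        mixed = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


if __name__ == "__main__":
    # Hypothetical 2-dimensional vectors standing in for three words.
    tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    outputs, weights = attention(tokens, tokens, tokens)
    for row in weights:
        print([round(w, 3) for w in row])
```

Each row of weights shows how much one word draws on every other word, and all rows can be computed independently. That is what lets the model process the whole sequence at once.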

AlphaGo Zero and Self-Learning

Around the same time, DeepMind introduced AlphaGo Zero, a system that learned in a completely different way. Instead of relying on human data, it started from scratch and learned entirely by playing against itself.

Through repeated self-play, it improved rapidly and went on to surpass the version of AlphaGo that had defeated Lee Sedol. This showed that powerful AI systems could be built without human examples, relying only on experience and learning.

GPT Models and Language AI

From 2018 to 2020, OpenAI introduced a new family of models known as GPT (Generative Pre-trained Transformer). Starting with GPT-1 and later GPT-2 and GPT-3, these models were trained on large amounts of text to understand and generate language.

They could write, answer questions, and perform a wide range of tasks with minimal task-specific training. This marked a major shift in the history of artificial intelligence, where AI systems became more general, flexible, and widely applicable.

The Public Awakening of AI (2022–2024)

ChatGPT and Mass Adoption

In November 2022, artificial intelligence became widely accessible to the public when OpenAI released ChatGPT. For many people, it was the first time they could interact with a capable AI system in a simple, conversational way. They could ask questions, write content, generate ideas, and even write code.

The response was immediate. ChatGPT reached one million users in just five days and an estimated 100 million users within two months, becoming one of the fastest-growing consumer applications in history. This marked a major shift in the history of artificial intelligence, as AI moved from research labs into everyday life.

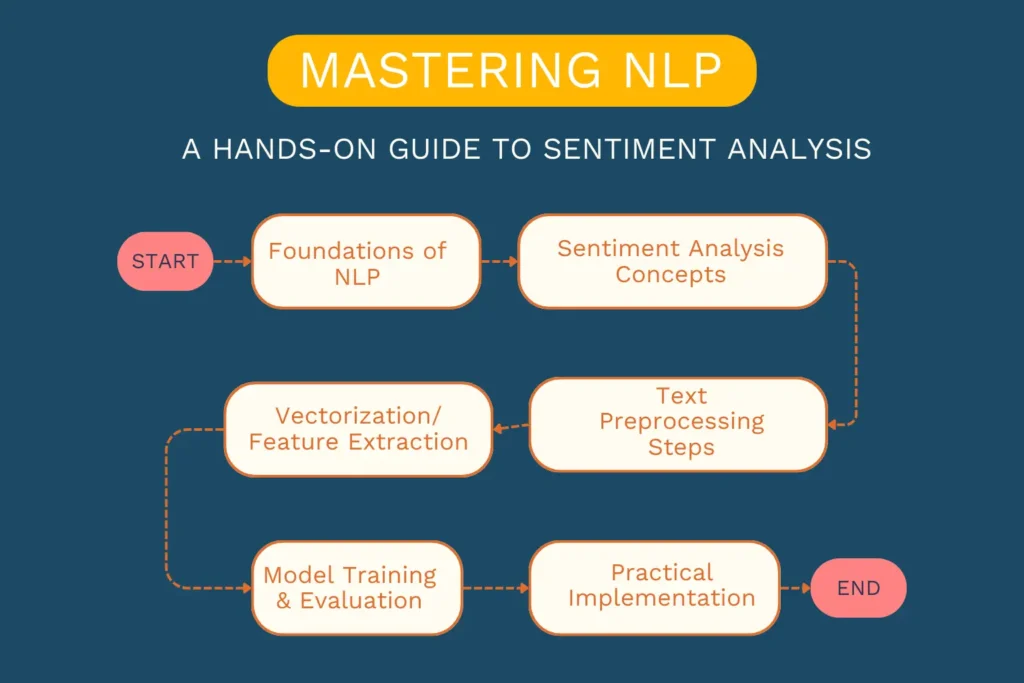

To understand NLP techniques in practice, check out our detailed guide: Mastering NLP: A Journey Through Sentiment Analysis

Global AI Competition

As AI adoption grew, competition intensified among major technology companies. Google introduced Bard, later rebranded as Gemini; Meta released LLaMA; and Anthropic developed Claude.

Each organization focused on building more capable and useful AI systems. This competition accelerated innovation and pushed AI development forward at a rapid pace.

Scientific Recognition

By 2024, the impact of AI was recognized at the highest level of science. John Hopfield and Geoffrey Hinton were awarded the Nobel Prize in Physics for their foundational contributions to neural networks. Hopfield introduced early models of associative memory, while Hinton advanced learning methods that became central to modern deep learning.

In the same year, the Nobel Prize in Chemistry was awarded to Demis Hassabis and John Jumper of DeepMind for their work on AlphaFold, which used AI to predict protein structures. Alongside them, David Baker was recognized for his contributions to computational protein design.

These recognitions showed that artificial intelligence was not only transforming technology, but also advancing fundamental science.

The Autonomous Age (2025–Present)

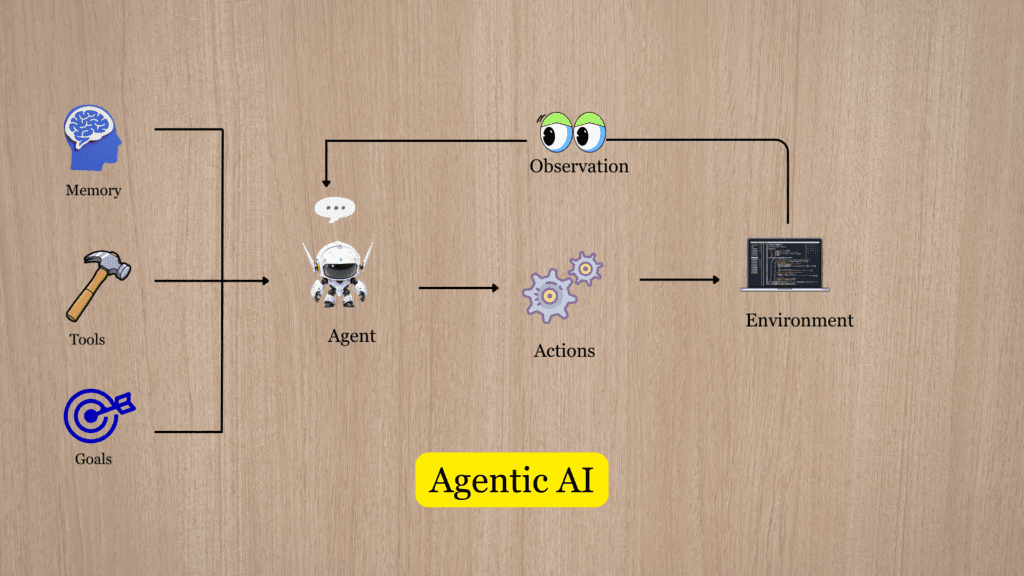

Rise of Agentic AI

By 2025, artificial intelligence had begun moving beyond simple responses. A new type of system, often called agentic AI, started to emerge. These systems can plan tasks, use tools, and take actions to achieve goals.

Instead of waiting for step-by-step instructions, they can break down problems and work through them independently. This marks a shift from AI as a tool to AI as an active system.

AI as a Decision-Making Partner

As AI systems improved, they began supporting real decisions across different fields. In software development, they help write and debug code. In research, they assist in generating ideas and analyzing complex problems. In healthcare, they support diagnosis and treatment planning.

At the same time, new models from developers around the world are shaping this space. OpenAI continues to advance general-purpose AI systems, while companies like Google, Anthropic, and Meta are building increasingly capable models. In parallel, openly released Chinese models such as those from DeepSeek and Alibaba’s Qwen family are expanding access and pushing innovation in new directions.

This marks a new phase in the history of artificial intelligence, where AI works alongside humans as a decision-making partner rather than just a passive assistant.

Invisible AI Infrastructure

Today, AI is becoming part of everyday technology. It is integrated into apps, search engines, communication tools, and operating systems. Many people now use AI without even noticing it.

Like electricity or the internet, AI is becoming an invisible layer that powers modern systems. What once felt experimental is now part of daily life, quietly shaping how we work, learn, and interact.

Final Thoughts: The Question Still Remains

The history of artificial intelligence shows a clear transformation over time. What began as simple machines following rules has evolved into systems that can learn, adapt, and make decisions. Each stage, from early logic-based models to modern AI systems, has shaped what we see today.

Artificial intelligence is now part of everyday life. It powers search engines, communication tools, healthcare systems, and many other technologies. In many cases, people interact with AI without even realizing it. It has become a core layer of modern technology and continues to grow in importance.

Yet, the original question still remains: can a machine truly think? Today, the answer is no longer just theoretical. AI can perform tasks that once required human intelligence. But its full potential is still unfolding, and the future of artificial intelligence is only beginning.

Frequently Asked Questions About Artificial Intelligence

When did the history of artificial intelligence start?

The roots of artificial intelligence go back to the 1940s and the first electronic computers, but it became a recognized field in 1956 with the Dartmouth workshop, whose proposal introduced the term artificial intelligence.

How has the history of artificial intelligence evolved over time?

Artificial intelligence has evolved from simple rule-based systems to machine learning and deep learning models. Over time, AI systems became more capable of learning from data and solving complex problems.

What is artificial intelligence?

Artificial intelligence is the ability of machines to perform tasks that normally require human intelligence. This includes learning from data, recognizing patterns, understanding language, and making decisions.

What is the difference between machine learning and deep learning in artificial intelligence?

Machine learning is a broad field within artificial intelligence where algorithms learn from data. Deep learning is a part of machine learning that uses multi-layered neural networks to learn more complex patterns.

Why did the history of artificial intelligence take so long to develop?

Artificial intelligence developed slowly because progress depended on large amounts of data, powerful computing systems, and advanced algorithms. These became available at scale only in recent years, allowing rapid progress.